OpenAI has revealed a comprehensive set of new safety measures designed to govern how its advanced generative AI systems are trained, deployed, and monitored. These “layered protections” are intended to minimize risks such as misuse, hallucinations, and unintended harmful outcomes while preserving rapid innovation momentum. The update underscores how seriously AI leaders now take ethical guardrails as both research and commercial applications scale dramatically.

Stronger AI safeguards and accountability are now core to competitive business strategy

These protections include enhanced monitoring of model outputs, stricter access controls for sensitive use cases, periodic safety audits, and improved incident response protocols. As AI models become more powerful and integrated into business workflows, safety and accountability have moved from academic concerns to central pillars of competitive strategy, especially for commercial platforms powering productivity, search, and enterprise automation.

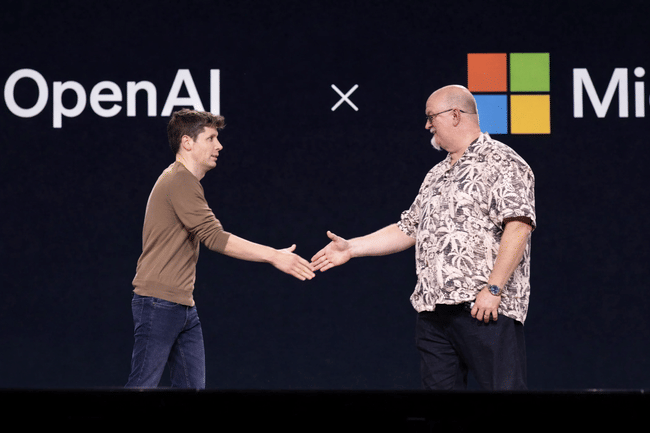

Microsoft’s strategic AI investment deepens with broader implications

OpenAI’s safety initiatives arrive against the backdrop of an evolving and deepening partnership with Microsoft. Since Microsoft made a multibillion-dollar investment in OpenAI and integrated AI models into its Azure cloud business, the alliance has helped accelerate both product innovation and enterprise adoption. Microsoft’s Azure OpenAI Service has become a cornerstone of its push to deliver AI-enhanced applications, tools, and services to corporate clients worldwide.

The partnership is synergistic: OpenAI benefits from Microsoft’s infrastructure scale and go-to-market reach, while Microsoft gains exclusive advantages in deploying cutting-edge models across productivity, security, and developer toolchains. The Microsoft-OpenAI relationship has reshaped the competitive landscape, raising the bar on what enterprise AI capabilities look like at scale.

Safety as a competitive differentiator in the AI era

While generative AI remains one of technology’s most powerful growth trajectories, safety concerns have also escalated, ranging from misinformation to model abuse and unintended societal impacts. OpenAI’s layered protections signal to enterprise clients, regulators, and the public that responsible AI deployment is not an afterthought. In doing so, OpenAI sets a benchmark for accountability in a space where ethical lapses can trigger regulatory scrutiny and brand risk.

This dynamic also creates competitive differentiation, as companies that can demonstrate robust safety frameworks may earn stronger adoption among risk-averse enterprises. Microsoft in particular leverages this by embedding OpenAI’s safeguards into Azure’s enterprise compliance protocols and security posture.

Market reaction: elevated expectations for enterprise readiness

The broader market has reacted to recent developments with increased optimism about the commercial viability of advanced AI solutions. Financial markets are interpreting the heightened focus on safety as a signal that enterprise adoption may accelerate, particularly among Fortune 500 companies that prioritize governance, privacy, and compliance. Microsoft’s stock performance has reflected this narrative, benefiting from both Azure growth and its strategic alignment with the evolution of generative AI offerings.

Investors are also paying attention to how safety protocols can mitigate downside risk for long-term AI deployment, which in turn may bolster confidence in subscription-based revenue models tied to Azure and AI service usage.

Regulatory context adds urgency to safety commitments

Generative AI companies are operating under growing regulatory scrutiny globally, with governments and standards bodies pushing for clearer guardrails around data usage, explainability, and systemic risk. OpenAI’s disclosure of its layered protections may preemptively address some regulatory concerns by demonstrating transparency and proactive governance. As the European Union and U.S. regulators continue to debate AI policy frameworks, companies with robust safety postures may enjoy smoother market access and reduced exposure to punitive enforcement actions.

This regulatory backdrop influences strategic positioning for both OpenAI and Microsoft as they expand into global markets.

Microsoft’s AI service expansion amplifies the ecosystem effect

Microsoft has integrated OpenAI models across a spectrum of products including Microsoft 365 Copilot, Dynamics 365 AI, and GitHub Copilot, driving stickiness among enterprise customers. This broad footprint amplifies the impact of OpenAI’s safety enhancements because the models underpinning these services now benefit from higher levels of oversight and governance. As businesses increasingly look to embed AI into mission-critical workflows, trust and reliability become decisive purchasing criteria.

The scaling of AI services across productivity and business process automation strengthens Microsoft’s recurring revenue base and positions it well against competitors scrambling to build comparable enterprise AI suites.

Investor implications: growth with guardrails

For investors, the twin narratives of innovation and safety governance create an intriguing risk-reward profile. On the one hand, the market opportunity for AI services is monumental, with enterprises budgeting for AI-enabled transformation and cloud providers capturing infrastructure and service revenue. On the other hand, governance failures could trigger backlash, regulatory clampdowns, or client hesitancy.

OpenAI’s layered protections help de-risk portions of this equation, enhancing confidence in the durability of AI deployments, a favorable signal for long-term investors and allocators interested in tech disruption tempered by responsibility.

Looking forward: AI strategy, execution, and collaboration

As the AI landscape evolves, OpenAI and Microsoft’s alignment, combining world-class model research with enterprise-ready infrastructure and safety protocols, positions them at the forefront of commercial AI adoption. Traders and longer-term holders alike will monitor execution on safety commitments, integration depth across Microsoft’s product suite, and emerging policies that could shape competitive dynamics. Together, these developments suggest that the AI era is shifting from proof of concept to governed, scalable deployment, and the companies that master both innovation and risk management are poised to lead the next chapter of technology growth.